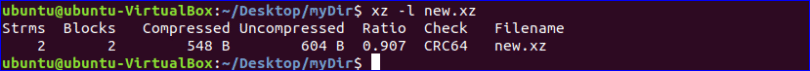

Is there some trick to fix it or is EPEL7 unsupported in SUSE Manager 2.1? I only found this thread getting EPEL 7 working on Spacewalk 2.1, but I couldn’t find a matching pyliblzma package. _decompress_chunked(filename, out, ztype)įile "/usr/lib64/python2.6/site-packages/yum/misc.py", line 742, in _decompress_chunked Return self._groupLoadRepoXML(text, self._mdpolicy2mdtypes())įile "/usr/lib64/python2.6/site-packages/yum/yumRepo.py", line 1418, in _groupLoadRepoXMLįile "/usr/lib64/python2.6/site-packages/yum/yumRepo.py", line 1402, in _commonRetrieveDataMDįile "/usr/lib64/python2.6/site-packages/yum/misc.py", line 1096, in decompress RepoXML = property(fget=lambda self: self._getRepoXML(),įile "/usr/lib64/python2.6/site-packages/yum/yumRepo.py", line 1452, in _getRepoXMLįile "/usr/lib64/python2.6/site-packages/yum/yumRepo.py", line 1442, in _loadRepoXML XZ can be 5-10x faster to compress than Brotli, especially at the highest compression level. Or, if you care a lot about compression (not decompression) speed. If 'primary' in :įile "/usr/lib64/python2.6/site-packages/yum/yumRepo.py", line 1461, in So, you would only benefit from using XZ: If the best available alternative is Gzip Or, if youre serving very large bundles of bytecode. xzdectest allocates a char device major dynamically to which one can write.

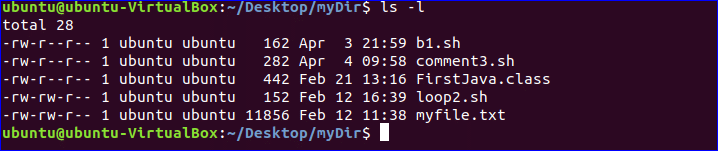

xzdectest is not useful unless you are hacking the XZ decompressor. The xzdectest module is for testing xzdec. Self.updateChannelChecksumType(plugin.get_md_checksum_type())įile "/usr/lib64/python2.6/site-packages/spacewalk/satellite_tools/repo_plugins/yum_src.py", line 189, The usage of the xzdec module is documented in include/linux/xz.h. var/log/rhn/reposync/epel7-x86_64.log hasįile "/usr/lib64/python2.6/site-packages/spacewalk/satellite_tools/reposync.py", line 173, in sync I added the channel and repository as usual, but syncing fails because of xz compression. We have Suse Manager Expanded Support for Red Hat / CentOS support. The strategy of ignoring exit status is valid, but it also gives us freedom to make changes to the exit code without disturbing that strategy.I’m trying to add an EPEL7 channel in SUSE Manager 2.1 for CentOS 7 hosts that I’ll maintain. > won't get more issues reported, because nobody sees these missed > shouldn't be ignored? I assume if you just ignore everything, then you I tried reinstalling a few times and I get the same problem. Using a complex format for long-term archiving would be a bad idea even if the format were well-designed, which xz is not. To begin with, xz is a complex container format that is not even fully documented.

> should there be distinct error codes for errors to ignore, and errors which As soon as I do a successful yum install epel-release (epel-release-7-6 or epel-release-7-8) on a fresh Centos7 installation then any subsequent yum update or yum install end with the error message xz compression not available. There are several reasons why the xz compressed data format should not be used for long-term archiving, specially of valuable data. (In reply to Matthias Klose from comment #6) debug_info (due to an executable not being compiled with -g) is not worth exiting with 1 for. In this case, the encoding, errors and newline arguments must not be provided. This command compresses the file data. Also included is a file interface supporting the. Jakub, WDYT? We could argue that not having. Except for the program name, the usage is identical to gzip: xz -v data.csv. However, before the 815ac61 commit, if we did the same in multifile mode, we got an exit status of 0:ĭwz: Too few files for multifile optimizationĪfter the 815ac61 commit, we get an exit status of 1, just as in regular mode: Notices (not warnings or errors) printed on standard error dont affect the. debug_info section, we get an exit status of 1:ĭwz: verilator_bin. xz, unxz, xzcat, lzma, unlzma, lzcat - Compress or decompress.

This is fallout of 815ac61 "Honour errors when processing more than one file". (In reply to Matthias Klose from comment #3)ĭwz: 2: DWARF compression not beneficial - old size 44630638 new size 44641594
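The "xz compression not available" failures reported on this page typically suggest that the Python interpreter running yum cannot import an lzma module (on Python 2.6 that would come from the pyliblzma package mentioned in the forum post). The sketch below is only an illustration of that check, not the code yum or Spacewalk uses. It assumes Python 3, where lzma is part of the standard library, and the function names xz_available, compressed_sizes and xz_file_roundtrip are made up for this example. It also compares gzip and xz output sizes on the same bytes and round-trips data through a .xz file opened in binary mode.

```python
import gzip
import os
import tempfile

try:
    import lzma  # standard library since Python 3.3
except ImportError:
    # Assumption: this mirrors the situation behind "xz compression not available".
    lzma = None


def xz_available():
    """Report whether this interpreter can handle xz data."""
    return lzma is not None


def compressed_sizes(data):
    """Compress the same bytes with gzip and, if possible, xz; return sizes in bytes."""
    sizes = {"gzip": len(gzip.compress(data))}
    if xz_available():
        sizes["xz"] = len(lzma.compress(data))
    return sizes


def xz_file_roundtrip(data):
    """Write data to a temporary .xz file in binary mode and read it back."""
    if not xz_available():
        raise RuntimeError("xz compression not available")
    fd, path = tempfile.mkstemp(suffix=".xz")
    os.close(fd)
    try:
        # Binary mode: no encoding, errors or newline arguments are passed.
        with lzma.open(path, "wb") as f:
            f.write(data)
        with lzma.open(path, "rb") as f:
            return f.read()
    finally:
        os.remove(path)


if __name__ == "__main__":
    sample = b"id,value\n" + b"1,example\n" * 10000
    print(xz_available())
    print(compressed_sizes(sample))
    print(xz_file_roundtrip(sample) == sample)
```

Which format comes out smaller or faster depends on the data and the compression level, so the sizes are worth measuring on real repository metadata rather than assumed.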
